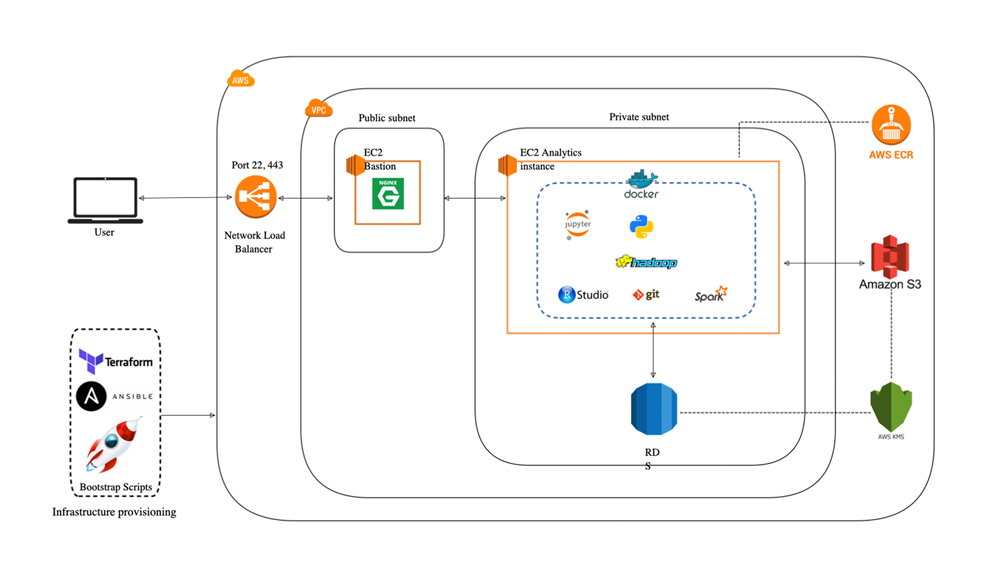

AWS - Analytics Automation

Organizations are experiencing a proliferation of data. This data includes logs, sensor data, social media data, and transactional data. It may reside in the cloud or on premises, or arrive as high-volume, real-time data feeds. It is increasingly important to analyze this data: stakeholders want information that is timely, accurate, and reliable. This analysis ranges from simple batch processing to complex real-time event processing. Automating workflows helps ensure that the activities driving these analytic processes take place when required.

With Amazon Simple Workflow Service (Amazon SWF), AWS Data Pipeline, and AWS Lambda, you can build analytic solutions that are automated, repeatable, scalable, and reliable. In this post, I show you how to use these services to migrate and scale an on-premises data analytics workload.

Workflow basics

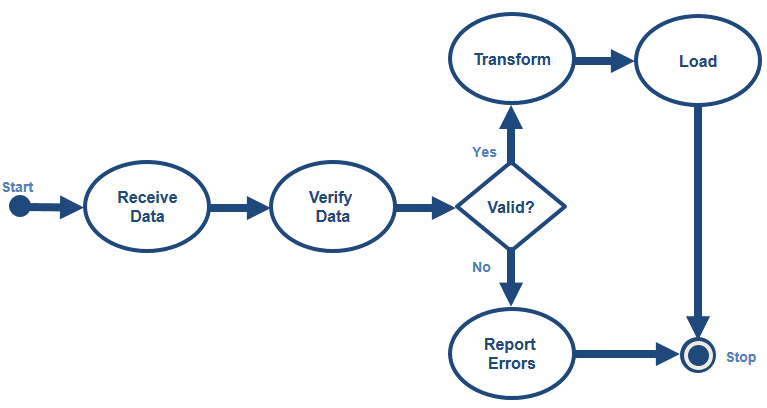

A business process can be represented as a workflow. Applications often incorporate a workflow as steps that must take place in a predefined order, with opportunities to adjust the flow of information based on certain decisions or special cases.

The following is an example of an ETL workflow:

A workflow decouples steps within a complex application. In the workflow above, bubbles represent steps or activities, diamonds represent control decisions, and arrows show the control flow through the process. This post shows you how to use Amazon SWF, AWS Data Pipeline, and AWS Lambda to automate this workflow.
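To make the vocabulary concrete, here is a minimal sketch in plain Python of a workflow built from activities, one control decision, and an explicit control flow. It does not reproduce the diagram above or call any AWS service; the step names (`extract`, `is_valid`, `transform`) and the sample records are hypothetical and chosen only for illustration.

```python
from typing import Any, Dict, List

Record = Dict[str, Any]


def extract() -> List[Record]:
    # Activity: stand-in for reading from a source system (hypothetical data).
    return [
        {"order_id": 1, "amount": 20.0},
        {"order_id": 2, "amount": -5.0},
    ]


def is_valid(record: Record) -> bool:
    # Control decision: only records with a non-negative amount continue.
    return record["amount"] >= 0


def transform(record: Record) -> Record:
    # Activity: derive a new field without mutating the input record.
    return {**record, "amount_cents": int(round(record["amount"] * 100))}


def run_workflow() -> Dict[str, List[Record]]:
    # Control flow: extract -> decision -> transform and load, or reject.
    loaded: List[Record] = []
    rejected: List[Record] = []
    for record in extract():
        if is_valid(record):
            loaded.append(transform(record))
        else:
            rejected.append(record)
    return {"loaded": loaded, "rejected": rejected}


if __name__ == "__main__":
    print(run_workflow())
```

Because each step is a separate function, any one of them could be replaced or moved to a different host without touching the others, which is the decoupling that a workflow service manages on your behalf.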

Overview

SWF, Data Pipeline, and Lambda are designed for highly reliable execution of tasks, which can be event-driven, on-demand, or scheduled. The following table highlights the key characteristics of each service.

| Feature | Amazon SWF | AWS Data Pipeline | AWS Lambda |
|---|---|---|---|
| Runs in response to | Anything | Schedules | Events from AWS services / direct invocation |
| Execution order | Orders execution of application steps | Schedules data movement | Reacts to event triggers / direct calls |
| Scheduling | On-demand | Periodic | Event-driven / on-demand / periodic |
| Hosting environment | Anywhere | AWS / on-premises | AWS |
| Execution design | Exactly once | Exactly once, configurable retry | At least once |
| Programming language | Any | JSON | Supported languages |
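One practical consequence of the "At least once" entry in the execution design row is that the same event can be delivered more than once, so a handler is usually written to be idempotent. The following is a minimal sketch under stated assumptions: the `id` and `payload` fields in the event are hypothetical, and the in-memory set stands in for a durable store, since a module-level set does not survive across separate execution environments.

```python
from typing import Any, Dict, Set

# Assumption: stand-in for a durable store of processed event IDs.
# An in-memory set only deduplicates within a single process.
_processed_ids: Set[str] = set()


def process(payload: Dict[str, Any]) -> int:
    # Placeholder for the real work; here it just counts the payload fields.
    return len(payload)


def handler(event: Dict[str, Any], context: Any = None) -> Dict[str, Any]:
    # "id" is a hypothetical field that uniquely identifies the event.
    event_id = str(event["id"])

    # Skip events that were already handled so a repeat delivery is harmless.
    if event_id in _processed_ids:
        return {"id": event_id, "status": "skipped"}

    result = process(event.get("payload", {}))
    _processed_ids.add(event_id)
    return {"id": event_id, "status": "processed", "result": result}
```

Calling `handler` twice with the same event processes it once and skips the repeat. With the exactly-once designs in the other two columns, this kind of deduplication is less of a concern for the task code itself.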

Let’s dive deeper into each of the services. If you are already familiar with the services, skip to the section below titled “Scenario: An ecommerce reporting ETL workflow.”

Technologies used:

- AWS

- Terraform

- Ansible

- Python

- EC2

- VPC

- RDS

- Docker

- Bash

- S3

- KMS

- CloudWatch

- NLB

- ECR